This is a response to last week’s blog post “One Database To Rule Them All: The Invisible Content Cartel that Undermines the Freedom of Expression Online”. [Ed.]

By Tech Against Terrorism

Summary

Tech Against Terrorism focusses on providing practical support to the tech sector in tackling terrorist use of the internet whilst respecting human rights. As a key partner to the Global Internet Forum to Counter Terrorism (GIFCT), we mentor companies on how to implement robust and human rights compliant policies and content moderation practices in line with the Tech Against Terrorism Pledge. Much of our work is focussed on developing data-driven tools to support both tech platforms and academic research. Our ambition is to help build scalable solutions that can support platforms of all sizes in a way that encourages transparency by design.

Through our work in developing the Terrorist Content Analytics Platform (TCAP), with support from Public Safety Canada, we are building the world’s first transparency-centred terrorist content tool. The TCAP alerts platforms to branded content associated with designated (far-right and Islamist) terrorist organisations, archives the material, and facilitates discussion between platforms, civil society, and academia to improve classification and moderation of illegal content. In developing the TCAP, we are facilitating swift and accurate action from tech companies in tackling terrorist content on their platforms in a transparent, accountable, and human rights compliant manner. A beta version for tech companies will be released in December of this year, whilst the beta version for academics and civil society will be released in 2021.

Background

On 4 November, VOX-Pol republished an article by Jillian C. York and Svea Windwehr of the Electronic Frontier Foundation (EFF), which outlines concerns about the GIFCT’s hash-sharing database. The concerns raised by York and Windwehr can be summarised as follows:

- Reliance on automated solutions to moderate content can lead to the incorrect removal of legitimate and legal speech, including documentation of war crimes, which risks harming freedom of speech

- Defining “terrorism” is a political undertaking, and the GIFCT hash-sharing database takedowns risk disproportionately affecting Arab and Muslim communities

- There is limited civil society oversight of the GIFCT and the hash-sharing database, and thus the database risks becoming a “content cartel” where online speech norms are developed without sufficient accountability

The concerns raised by York and Windwehr are important. In this piece, we detail how we address these concerns in our development of the Terrorist Content Analytics Platform (TCAP).

Scope: preventing undue norm setting of online speech

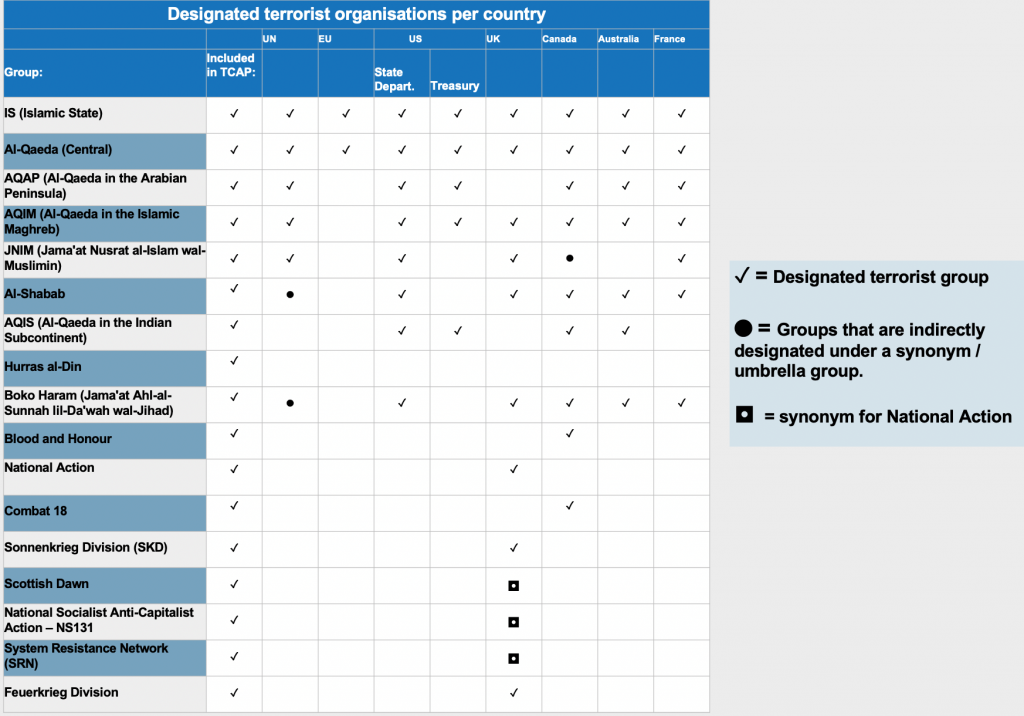

When tackling terrorist use of the internet, it is vital that the rule of law is respected and not undermined by extra-legal mechanisms. We are aware that we, as a non-governmental organisation, should not set global norms for online speech. Therefore, we have based the TCAP’s content inclusion policy on existing international, regional, and national designations of terrorist groups. Further, since terrorists largely produce propaganda for strategic communication purposes, we will in the first instance include official, branded content. In its initial version, the TCAP will contain official content from Islamic State and al-Qaeda (and their affiliates) and, crucially, official content from designated far-right terrorist groups, including National Action and Blood and Honour.

By grounding the TCAP in the rule of law, we hope to avoid contributing to undue norm-setting of online speech. To help create definitional clarity for tech companies, we further encourage governments to improve and reform existing designation mechanisms based on the rule of law. Instead of developing complex new regulations about “harmful” content, we believe that greater attention should first be paid to existing (but under-utilised) resources, such as the formal terrorist designation process.

Accuracy and accountability: preventing incorrect removal of content

Accuracy and accountability are two of our key principles in developing the TCAP. York and Windwehr correctly highlight that reliance on automated content moderation tools can lead to the wrongful removal of content. To prevent this, we will implement a rigorous verification process in which an academic advisory board will verify that content meets the threshold for inclusion. Anyone with access to the TCAP can also share their views on how the content is classified. Likewise, platforms will be able to see what action, if any, other platforms have taken. Over time, we will encourage platforms to annotate notable content moderation decisions in order to provide more transparency about how their respective Terms of Service are implemented. In doing so, we encourage platforms to create content moderation policies (and practices) that are transparent by design and thereby support knowledge-sharing across the tech industry.

Crucially, the TCAP will give civil society and experts visibility of flagged content hosted on the platform and of companies’ subsequent content moderation decisions. There will be an appeals process through which users can report content that they think has been wrongfully included, and the platform’s functionality will support compliance with the GDPR. Lastly, we believe that the TCAP can support the archiving of content removed by tech companies under their counterterrorism policies, and thereby provide an evidence base for cases involving terrorist offences, war crimes, and human rights abuses, as Human Rights Watch suggested in a recent report.

Tech platform autonomy: ensuring independent company decision-making

Our mission is to support tech companies in tackling terrorist use of the internet, not to tell them what to do. Therefore, any alerts shared with tech companies via the TCAP are advisory only. These alerts will provide relevant context about the content, as well as about the groups involved and their designation status. Such alerts will empower tech companies to make swift, independent, and informed decisions about removing content from their platforms. Further, tech companies will be able to examine all content stored on the TCAP to help inform their content moderation decisions. We believe that such tech company autonomy will provide another safeguard against “content cartel creep”.

Inclusion of civil society in all stages of the process

Civil society feedback is crucial in ensuring that the TCAP is developed in a transparent and accountable manner. Last year, we completed a public online consultation process seeking insights from civil society groups, tech companies, and academics to better understand what steps we should take to avoid some of the risks highlighted by York and Windwehr. Findings from this process are available in a report published earlier this year. However, the consultation process has not ended, as we continue to speak to civil society actors and experts, including via our monthly “Office Hours”.

We believe it is critical that data-driven solutions to tackle online terrorist content are developed from the outset with appropriate mechanisms to uphold human rights, freedom of expression, transparency, and accountability. At a structural and systemic level, such systems need to support these vital checks and balances; these mechanisms cannot be an afterthought. The TCAP will archive content so that those with a need to know have access and can share their views on the content being identified. We will ask only that TCAP users not share content outside the platform, as we believe that in most cases doing so gives terrorists free publicity.

With the TCAP, we aim to provide the support that smaller tech companies need whilst doing our part to help keep the internet open, vibrant, and free. To find out more about how you can join us in this mission, please reach out via our Twitter handle @techvsterrorism.

Tech Against Terrorism is a public-private partnership that supports the tech industry, and in particular smaller platforms, in tackling terrorist use of the internet whilst respecting human rights through open-source intelligence analysis, mentorship, and operational support. Tech Against Terrorism works closely with the UN and the Global Internet Forum to Counter Terrorism.