By Tom Thorley & Erin Saltman

This article summarises a recent paper published in Studies in Conflict & Terrorism that forms part of a special issue on the practicalities and complexities of (regulating) online terrorist content moderation. The special issue contains papers that were presented at Swansea University’s Terrorism and Social Media Conference 2022.

Technical tools, and the ways trust and safety teams use them to identify, review, and remove terrorist and violent extremist content online, have evolved significantly in recent years, but we still lack a comprehensive understanding of how to combine these tools with human expertise to optimize what both do best. Tech platforms today often deploy layered or hybrid models for detecting this content and determining actions to take in line with their respective policies and terms of service. These models and approaches often combine different signals about user-generated content to ensure greater accuracy in identifying threats and content that may require urgent review. Processes usually include internal reviews to check the accuracy of decisions to action or remove content, and can even deploy messaging to users in the form of counter or alternative narratives that challenge violent extremist ideologies.

In order to enhance our collective understanding, the Global Internet Forum to Counter Terrorism (GIFCT) developed demonstrative approaches for combining terrorist and violent extremist signals and tooling, to assess how best to decrease the likelihood of false positives and increase accuracy in surfacing terrorist and violent extremist content (TVEC) online related to incident response.

Most research outside of tech companies has tested one tool or algorithm at a time for detecting harmful content, either in controlled environments or in the public online ecosystem, with varying results (discussed in GIFCT’s longer report). Instead, GIFCT’s methodology layers behavioural and linguistic signals in an attempt to more accurately and proactively surface TVEC relating to potential real-world threats from terrorist and violent extremist groups across both Islamist extremist and white supremacy-related ideologies.

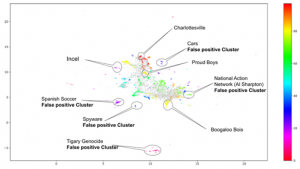

First, GIFCT’s methodology starts with a keyword list of identified terms based on research from subject matter experts, an often-used entry point for using technical tooling to detect terrorist content online. The methodology then uses a machine learning model called Bidirectional Encoder Representations from Transformers (BERT) to generate a feature vector representation of each post that takes into account the surrounding words within a sentence. It applies Uniform Manifold Approximation and Projection (UMAP) for dimension reduction and HDBSCAN for clustering, and then uses term frequency-inverse document frequency (TF-IDF) to determine the words that best summarize all posts in a topic cluster, so that a human can interpret the cluster more easily. The accompanying topic cluster diagram is an example that maps topics from posts containing white supremacy-related keywords, resulting in clusters that can be labeled with distinct topics to differentiate between posts that are relevant and clear false positives.
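The final summarization step can be illustrated with a minimal sketch. It is not GIFCT's implementation: it assumes the cluster labels have already been produced upstream (in the methodology above, by HDBSCAN on UMAP-reduced BERT vectors), and it treats each cluster as a single document when computing TF-IDF. The function name `top_terms_per_cluster`, the benign sample posts and the labels are all hypothetical.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase a post and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def top_terms_per_cluster(posts, labels, top_n=3):
    """Return the top_n highest-scoring TF-IDF terms for each cluster.

    Assumption: each cluster is treated as one document, so a term scores
    highly when it is frequent in that cluster and rare in the others.
    """
    cluster_counts = defaultdict(Counter)
    for post, label in zip(posts, labels):
        if label == -1:  # HDBSCAN marks unclustered (noise) points with -1
            continue
        cluster_counts[label].update(tokenize(post))

    n_clusters = len(cluster_counts)

    # Document frequency: in how many clusters does each term appear?
    doc_freq = Counter()
    for counts in cluster_counts.values():
        doc_freq.update(counts.keys())

    summary = {}
    for label, counts in cluster_counts.items():
        total = sum(counts.values())
        scores = {
            term: (count / total) * math.log(n_clusters / doc_freq[term])
            for term, count in counts.items()
        }
        # Sort by score (descending), then alphabetically for stable output.
        ranked = sorted(scores, key=lambda term: (-scores[term], term))
        summary[label] = ranked[:top_n]
    return summary


# Hypothetical, deliberately benign posts with pre-assigned cluster labels.
posts = [
    "match tickets for the football final",
    "football final score and match report",
    "recipe for the tomato soup",
    "tomato soup recipe with basil",
]
labels = [0, 0, 1, 1]

summary = top_terms_per_cluster(posts, labels)
print(summary)
```

Words shared by every cluster (here "for" and "the") score zero, which is why the summary terms help a human reviewer tell a relevant cluster apart from a false-positive one.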

This approach can be further enhanced by layering multiple keyword lists. In this research, keywords related to specific terrorist and violent extremist groups were combined with keywords related to ongoing violent attacks and incidents as a second layer to narrow results towards credible threat material. This research shows where layered signal methodologies can decrease false positive rates for surfacing terrorist and violent extremist content, but also highlights how difficult accurate proactive work in near real-time for crisis and incident response can be in the online space. It also shows where third-party researchers might be limited in testing methods without access to the fuller datasets that platforms have.
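As a rough illustration of layering two keyword lists, the sketch below surfaces a post only when it matches at least one term from each list. The term lists are placeholders rather than real keywords, the function names are hypothetical, and matching is limited to single-word terms; it is a simplification, not the matching logic used in the research.

```python
import re


def tokenize(text):
    """Lowercase a post and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def layered_filter(posts, group_terms, incident_terms):
    """Keep posts that match BOTH layers: a group term AND an incident term."""
    surfaced = []
    for post in posts:
        tokens = tokenize(post)
        if tokens & group_terms and tokens & incident_terms:
            surfaced.append(post)
    return surfaced


# Placeholder keyword lists (assumptions for illustration only).
GROUP_TERMS = {"groupx", "groupy"}
INCIDENT_TERMS = {"attack", "shooting"}

posts = [
    "News report about the history of GroupX",  # group term only
    "GroupX claims the attack downtown",        # both layers match
    "Heart attack symptoms to watch for",       # incident term only
]

surfaced = layered_filter(posts, GROUP_TERMS, INCIDENT_TERMS)
print(surfaced)
```

In this toy example a single list would surface two posts, while the layered filter surfaces one, which is the false positive reduction the layered approach aims for.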

Further information on this methodology, including its evolution and the lessons learned in its development, is available in the full paper. The complete paper also explores a number of technologies and approaches used by tech companies in the continued effort to prevent terrorists and violent extremists from exploiting digital platforms.

The deployment of moderation tools, whether through more basic algorithms or complex machine learning, should be done with care and with oversight of potential unintended consequences. Tech companies that GIFCT is familiar with often have engineering teams and data scientists that work with product policy teams, legal oversight mechanisms, and ethical review teams when developing and deploying algorithms that impact user experience and data collection on a platform. Tools can help companies reach the scale and speed necessary for enforcing their policies globally. However, this should not come at the cost of significant accuracy errors that impinge on legitimate content or potentially affect marginalized, vulnerable or persecuted populations.

Ethical and human rights considerations are important to define parameters for the deployment of new or advanced tooling that assists moderation processes. However, we currently lack globally recognized parameters to guide tech companies in practical terms about how to prioritize rights when conflicts and competing priorities emerge, or practical steps to take when assessing an algorithmic tool or process. Absent these parameters, several frameworks and recommended guidelines exist to assess ethical and human rights considerations. Focused on companies’ applications of policies, the UN Guiding Principles on Business and Human Rights are a bedrock for tech companies. Looking specifically at guidance for the deployment of artificial intelligence tooling, the Council of Europe developed a series of recommendations for tech companies, including lists of Do’s and Don’ts around Human Rights Impact Assessments. In the deployment of technical solutions for countering terrorism and violent extremism on platforms, the GIFCT Technical Approaches Working Group (2020–2021) produced a report led by Tech Against Terrorism, which included a section about protecting human rights in the deployment of technical solutions. The paper noted four primary areas of concern:

- Negative impacts on freedom of speech from the accidental removal of legitimate speech content, particularly affecting minority groups.

- Unwarranted or unjustified surveillance.

- The lack of transparency and accountability in the development and deployment of tools.

- Accidental deletion of digital evidence content that is potentially needed in terrorism and war crime trials.

Ultimately, guiding principles for tech companies in content moderation practices and data collection can lean into measures deemed necessary, lawful, legitimate and proportionate, and action taken to remove content should be tied to a defined and defensible threat.

There are some key takeaways and lessons learned from this initial tech trial, which looked to combine behavioral signals based on keyword lists to proactively surface terrorist and violent extremist incidents online. While using a single behavioral indicator is unhelpful in surfacing terrorist or violent extremist activity or content, combining keywords and layering signals can improve accuracy. There is great potential in combining and layering behavioral signals for proactive hybrid models of threat detection, but even with the emergence of generative AI and large language models (LLMs) like GPT-4, human-led subject matter expertise remains important in ensuring quality oversight.

GIFCT looks forward to continuing its tech trials and working with the technology sector and relevant stakeholders in advancing efforts to prevent terrorists and violent extremists from exploiting technologies.

Tom Thorley is the Director of Technology at the Global Internet Forum to Counter Terrorism (GIFCT) and delivers cross-platform technical solutions for GIFCT members.

He worked for over a decade at the British government’s signals intelligence agency, GCHQ, where he specialized in issues at the nexus of technology and human behaviour. Tom spent five years working with US Government, Military and Intelligence agencies, coordinating intergovernmental relationships and providing expert consultancy on cyber issues, disinformation, technology strategy and operational planning. Prior to his deployment to the US, Tom built and operationalized Data Science teams to inform operations and discover threats, particularly focused on counter-terrorism.

As a passionate advocate for responsible technology, Tom is a member of the board of the SF Bay Area Internet Society Chapter, a mentor with All Tech Is Human and Coding It Forward, and a volunteer with America On Tech and DataKind.

Tom is a graduate of the University of Liverpool (BA) and the University of Bath (PgC).

Dr. Erin Saltman is the Director of Programs and Partnerships at GIFCT. She has worked in the technology, NGO, and academic sectors building out counterterrorism strategies and programs internationally. Dr. Saltman’s background and expertise include both white supremacy and Islamist extremist processes of radicalization within a range of regional and socio-political contexts. Her research and publications have focused on the evolving nature of violent extremism online, youth radicalization, and the evaluation of counterspeech approaches.

She was formerly Meta’s Head of Counterterrorism and Dangerous Organizations Policy across Europe, the Middle East, and Africa. She also spent time as a practitioner working for ISD Global and other CT/CVE NGOs before joining GIFCT. Dr. Saltman is a graduate of Columbia University (BA) and University College London (MA and PhD).

Image Credit: PEXELS
