By Lorand Bodo

In recent years, governments and companies have had to respond to terrorists and other violent extremists using the Internet, especially social media platforms, to propagate their messages and to radicalise others. For example, the UK government recently proposed to tighten the law on violent extremist content online by criminalising the repeated viewing or streaming of terrorist material. And major global technology companies, such as Facebook, Microsoft, and Twitter, have created a Global Internet Forum to Counter Terrorism to explore new ways to frustrate the online activities of terrorists and violent extremists.

One good example of the challenges involved in this effort is the chequered history of social media platforms suspending user accounts and removing content associated with terrorism or violent extremism. In March 2017, for example, Twitter announced that it had suspended over 500,000 accounts since the middle of 2015. Researchers at the VOX-Pol Network of Excellence have analysed the impact of Twitter’s takedown activities in the context of the Islamic State (IS). Their findings suggest that Twitter has, over time, become a less permissive operating environment for IS and its sympathisers.

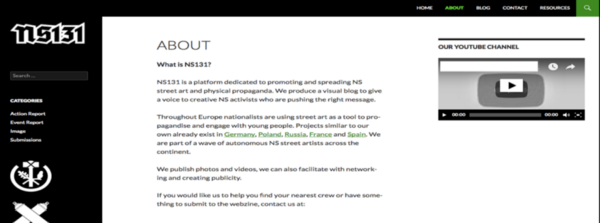

While this holds true for IS, we were keen to see if the same assessment applies to the social media activities of extreme right-wing (XRW) groups in the UK, as the danger posed by the extreme right is currently growing. One of the most prominent XRW groups in the UK is National Action (NA), which in December 2016 became the first extreme right-wing group to be proscribed as a terrorist organisation in the UK. The UK Home Office subsequently identified two alternative names for the group, Scottish Dawn and National Socialist Anti-Capitalist Action (NS131), and recently extended the prohibition to cover both of these aliases.

Of course, the legal proscription of an extremist group does not inevitably or immediately lead to the total disappearance of its online propaganda. This is apparent in this case: the official websites of both Scottish Dawn and NS131 are still available online.

The most probable reason why both of these websites remain online, despite both groups having been banned in the UK, is that they are hosted on servers overseas and are therefore subject to a different jurisdiction. Using servers located in the United States, for example, could provide a ‘safe haven’ for the publications of violent extremists because US-hosted servers are covered by First Amendment free speech protections. Put differently, the websites of Scottish Dawn and NS131 could continue to be publicly accessible unless the respective hosting companies voluntarily choose to remove them from their servers. An example of this kind of discretionary action occurred in the recent case of The Daily Stormer, a Neo-Nazi website that had its domain registration cancelled by GoDaddy, and subsequently by other, non-US companies to which it attempted to switch its domain registration. This case illustrates the complexity of the challenge of removing extremist content from the Internet. As the Internet is global, it is unrealistic to expect progress without international cooperation involving both governments and the private sector.

Returning to the UK, we briefly explored National Action’s online activities, especially its social media presence, since it was banned in December 2016. In this blog post, we want to emphasise one aspect of this activity: the group’s presence on Twitter. Whilst it was easy to find National Action’s old Twitter handle, what surprised us was that we could still easily access the content posted by this account, almost a year after the group was banned and despite the account having been withheld by Twitter. This raises a question about the effectiveness of Twitter’s effort to remove National Action-related content from its site: why is this content still available on Twitter?

The answer is that National Action’s account was not deleted from Twitter, but was instead withheld from Internet users located in France and the UK. This explains why we were still able to access this content: we visited the account using a virtual private network (VPN), in this case with an Australian IP address. The National Action account has been inactive since December 2016, the date of NA’s proscription by the UK government. Its historical content, including 396 tweets, is nevertheless accessible to its 3,157 followers and to anyone else who accesses the Internet from an IP address located outside the UK or France, e.g. by using a browser-extension VPN, which takes less than a minute to install. Withholding the account in these two countries might bring Twitter into compliance with UK legislation, but deleting the account would seem to be a necessary extra step to properly remove access.
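To make the weakness of this approach concrete, here is a minimal sketch of how country-level withholding could work in principle. It is purely illustrative and is not Twitter’s actual implementation: the `Account` class, the `is_visible` function and the `example_account` handle are hypothetical names, and we assume the platform infers a viewer’s country solely from the apparent location of their IP address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    """Hypothetical account record with a per-country withholding list."""
    handle: str
    withheld_in: frozenset = frozenset()  # ISO 3166-1 alpha-2 country codes


def is_visible(account: Account, apparent_country: str) -> bool:
    """Return True if the account is shown to a viewer in apparent_country.

    The check only sees the country derived from the viewer's IP address,
    which is the VPN exit location when a VPN is in use.
    """
    return apparent_country.upper() not in account.withheld_in


# Assumed example: an account withheld in the UK ("GB") and France ("FR").
account = Account(handle="example_account", withheld_in=frozenset({"GB", "FR"}))

uk_direct = is_visible(account, "GB")    # viewer connecting directly from the UK
uk_via_vpn = is_visible(account, "AU")   # same viewer, Australian VPN exit
```

In this sketch, the same UK-based viewer is blocked when connecting directly but sees the account once their traffic appears to come from Australia, because the check has no information beyond the apparent IP location.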

This example suggests that further research into XRW online activities, and into social media companies’ efforts to restrict these activities, would be worthwhile. It highlights the challenges to effective counter-extremism efforts online, the crucial role of the private sector, and the limited practical value of certain techniques to restrict access to extremist content. Given the wide spectrum of national regulatory environments and the ubiquitous availability of VPNs, blocking content based simply on the apparent location of an Internet user’s IP address is a fairly ineffective way of countering the accessibility of extremist content online.

This article was written by Lorand Bodo, a researcher at Ridgeway Information focusing on open source intelligence (OSINT) and online extremism. You can find him on Twitter @LorandBodo. This post was edited by Dr Joe Devanny, programme director for security at Ridgeway Information. You can find him on Twitter @josephdevanny.

This article was originally posted on Medium.com on 13 October, 2017. Reposted here under Creative Commons License.