By Mark Dechesne

At a time when world news headlines are dominated by Covid-19, we must not forget that for the past decade, the nations of Europe have been plagued by another fast-spreading and often deadly epidemic: the circulation of socially corrosive, extremist language via social media.

This type of social media communication has helped ISIS to establish its reputation as a significant actor able to mobilise tens of thousands of young Muslims to travel to Syria, join ISIS, sympathise with its cause or act on its behalf. We have also observed the rise of online Right-wing extremist rhetoric conveying a message of racial superiority, delegitimisation of democratic institutions, and intolerance. Significant hate- and terrorism-related incidents have been linked to prior social media use.

The need to refine policies to counter online extremism remains

The global effort to counteract the ongoing ISIS social media offensive and to respond swiftly to terrorist attacks such as the one in Christchurch, New Zealand, has focused on curbing online hate speech and radical messaging. Many of these measures concern the crackdown on incitement to hatred and violence. Indeed, the EU recognises the online social media sphere as an integral part of the world of violent extremism and has put measures in place to enable a robust response to the online propagation of hatred and incitement to violence.

With the commitment of social media companies such as Twitter and Facebook, this has led to a considerable reduction of explicit extremist content on the internet. But it has not necessarily eradicated the problem. Extremists have found new digital environments and new ways to spread their hateful message; messages that were initially shared via Twitter or Facebook can now be seen on Telegram or in discussion fora such as 8chan. And communication that remains on popular platforms has adopted new, less explicit forms. In this latter sense, the new extremism on platforms such as Facebook and Twitter can be considered, to borrow a contemporary term, asymptomatic: it is social media discourse that fails to qualify for prosecution by law enforcement or banning by social media companies, but that espouses a message promoting intolerance and polarisation and that may inspire individuals at risk to engage in extremist acts.

DARE identifies extremism under the radar of law enforcement and social media’s standards

In an effort to understand contemporary radical social media discourse, a group of researchers from the EU-funded project Dialogue about Radicalisation and Equality (DARE) conducted an extensive analysis of what was labelled ‘Right-wing extremist’ and ‘Islamist extremist’ discourse on Twitter between 2010 and 2019. The research covered samples from Greece, France, Norway, the Netherlands, the UK, Germany and Belgium. Twitter accounts were included in the sample on the basis of characteristics such as militarism, support for violence, conspiracy thinking and anti-system attitudes, among others. For the selection of Right-wing extremist accounts, anti-Muslim and anti-immigration attitudes were also used as criteria. For the Islamist extremists, we also considered the propagation of fundamentalist views.

Given prior reporting that suggests the widespread presence of extremist discourse on Twitter, it is perhaps surprising that the DARE research project encountered considerable difficulty in identifying accounts for in-depth analysis for both strands of extremism, and especially for the Islamist extremists. This underscores the importance of carefully comparing sampling methodologies across reports in order to reach a broadly shared assessment of the presence of online extremism on Twitter. Online extremism was registered in all the European countries under consideration, although it may display a limited and varied set of characteristics and remain under the radar of law enforcement and of social media companies’ own standards for banning hostility and hate on their platforms. In particular, the research registered:

Persistent negativity. One particularly salient characteristic of the Twitter debates is an apparently generalised negative attitude. Regardless of the issue under discussion, the accounts in the sample were far more likely to be against something than for something. This tendency is more salient among the Right-wing extremist accounts studied than among the Islamist extremist accounts. The Islamist extremist accounts discuss Western political matters and Western involvement in the Middle East with negativity, but also talk about religious affairs and the Muslim community in a positive manner. For the Right-wing extremist accounts, the negativity not only pertains to immigration or Islam; it extends to a wide range of salient issues in national and European politics. This has potential implications for understanding xenophobia and Islamophobia online. These phenomena may not be the result of negative attitudes towards immigrants or Muslims per se. Rather, they may be a side effect of the salience of immigration and Islam as current policy challenges. As attention shifts to other issues, we may also observe negativity in those domains. For instance, in recent months a surge in sympathy for farmers’ protests can be observed among some of the Right-wing samples.

Excessive focus on threats and injustices. Regardless of the sample (Right-wing extremist or Islamist extremist), we observed an excessive focus on threats and injustices. For the Right-wing extremist sample, these threats pertain to immigration, ‘Islamisation’, and the gradual devaluation and disappearance of national culture and identity. Among the Right-wing extremists, this leads to an obsession with crimes committed by immigrants and with jihadist terrorist attacks. In the discourse of Islamist extremists, we note an obsession with discrimination and injustice committed against Muslims in European countries and around the world. In both Right-wing and Islamist extremist discourse, the often graphic depictions of such grievances are used to suggest that collective identity is under threat. The descriptor ‘excessive’ is used here not only because of the graphic display of these injustices, but also because their pervasiveness is emphasised to imply that the injustices are structural rather than incidental.

Perception of the state, education system, and media as a unity that contributes to, or fails to address, the threats. A pervasive theme in the Twitter debates is the perceived inability of the state to deal effectively with the threats mentioned above. The Right-wing extremist sample attributes this inability partly to the dilution of national political authority resulting from participation in the EU. It also blames a focus on political correctness in media and education that is perceived to blindly promote equality regardless of differences, and what is considered a concerted effort by left-wing politicians, the mainstream media, and the education system to cover up the true extent of the threat posed by immigration and Islam. The Islamist extremist samples emphasise a double standard under which Muslims are judged more harshly and excluded from opportunities, despite societal and administrative claims that they enjoy equal rights.

National history, culture or religion as a basis for an alternative to the current ruling order. As an alternative to the current state of affairs in society, the samples draw on historical, cultural, or religious references that might serve as a guide for public life. Among the Right-wing extremist sample, we find references to historical national heroes and to images of a glorious national or European past. A key element in these references is the perceived (sometimes racial) purity that once existed and is now threatened by immigration and ‘Islamisation’. Among the Islamist extremist samples, we find references to religious scripture (most notably the Qur’an) and the figures who appear in it, which serve as guidance for a ‘pure’ lifestyle amidst a chaotic and unjust world.

Mockery. This alternative worldview is wrapped in a negative stance and a mocking attitude towards representatives of the current ruling order. Representatives of this order, most notably political leaders, judges, and media figures, are derided through caricature and hate speech. Among the Right-wing extremist sample, this derogatory portrayal also extends to immigrants and Muslims. In the Islamist extremist sample, the targets are most notably politicians and judges who are perceived as particularly instrumental in applying the perceived double standard. Both samples contain numerous references to violence, but this violence is portrayed as being directed against their respective communities and as evidence of the urgency of the threats they face, rather than as calls to engage in acts of violence. The relative absence of direct calls to violence could in part be explained by regulatory efforts regarding social media communication.

A growing concern? Trends over time and strength of networks

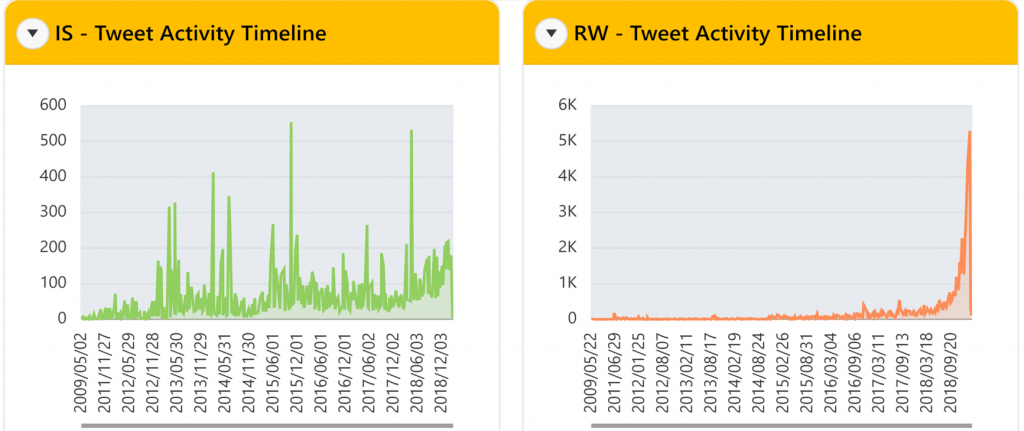

Across the European online cultures investigated, the Right-wing extremist discourse was found to be growing – activity significantly increased over the timeframe studied. For the Islamist extremists, the activity was observed to be scattered across the past decade, with limited signs of recent growth in activity.

For the Right-wing extremists, across Europe there appears to be a relatively small number of highly visible international political leaders who have a considerable impact on the debates (as reflected in the number of likes and mentions), most notably Trump, but also Bolsonaro, Salvini, and Farage. For the Islamist extremist samples, the research failed to identify particularly strong external influencers.

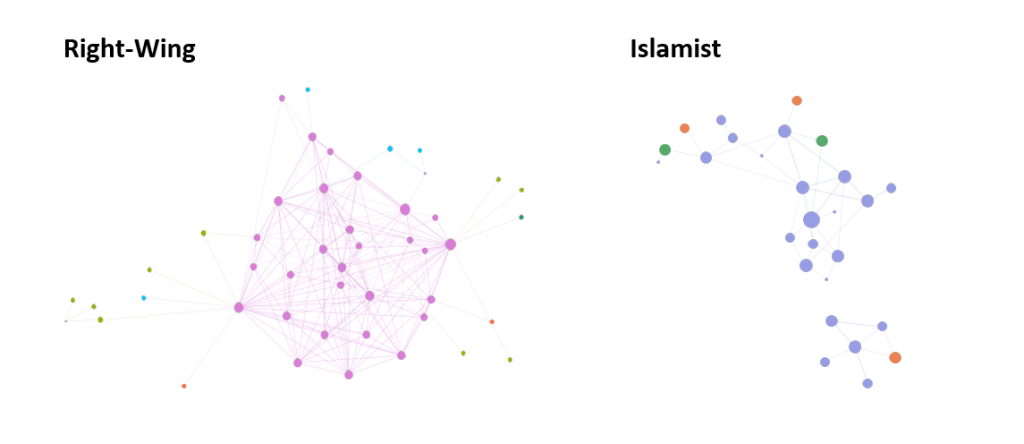

For the Right-wing extremist sample, the research consistently observed close-knit networks across countries, with contributors frequently sharing information and liking or retweeting each other’s messages. In this regard, the Right-wing extremist online world on Twitter can be considered a ‘milieu’. In contrast, the research found limited sharing of information, and little liking or retweeting, among the Islamist extremist samples. This was observed throughout Europe. In terms of the interaction between accounts in the samples, the Right-wing extremists and Islamist extremists appear to be fundamentally different.

The study also examined gender differences in participation in the online debate, uncovering differences in the degree of involvement in particular country-specific debates. Gender was also a topic of debate, for example the need to protect the West against the gender inequality allegedly promoted by Islam (rape, the burka), and the gender-specific ways in which one shows devotion.

Transmission without transition as an effective policy

To address the challenges raised by the observations reported above, a ‘transmission without transition’ policy is proposed that seeks to balance inclusiveness with the prevention of extremist acts. Fundamental to it is the ambition to recognise inequalities and promote fairness while creating inclusive, innovative, and reflective societies. This policy will require efforts from both the public and private sectors involved in facilitating online communication.

Merely broadening the criteria for banning messages may be perceived by the vast majority of the community members investigated in the DARE project as another effort by the “elite” to silence opposing voices and to hide the truth about threats and injustices committed against the community that the tweeters identify with. In this sense, it may fuel rage rather than mitigate extremism. At the same time, although it is clear that the vast majority of people who are exposed to extremist ideas, or who even contribute to extreme debates, will not engage in illegal extremist acts, the extremist ideas themselves can lead at-risk individuals to plan and conduct extremist acts. This by itself should be a reason for caution about allowing extremist ideas to be freely available on online platforms.

Policy recommendations

1. Improve diagnosis of online extremism

a) Improve the taxonomy of extremist online social media discourse. At present there is considerable difference of opinion regarding what constitutes extremist discourse and what distinguishes it from non-extremist messaging. All too often, the application of the label ‘extremism’ is taken for granted, and in many published reports it is unclear what has actually been studied under the heading of ‘extremism’. Policy to address extremism would greatly benefit from a broadly shared taxonomy that describes the common characteristics of online extremism and captures variation in the degree of extremism.

b) Make an effort to understand the person behind online extremism. Often, especially given the fleeting communication and anonymity of the online world, the personal dimension of online messaging is ignored. However, ethnographic research conducted within the DARE project alongside the current research on the online world shows a quite varied picture regarding the motivations behind online involvement and the extent to which the online world affects behaviour in the offline world, where extremism can have the most dramatic impact. It is vital to take this variation into account for an informed discussion of the subject. To that end, further research on the impact of online activity on offline behaviour is essential.

c) Identify characteristics of individuals at risk of transitioning to extremism following online involvement. Given the variation in the degree and manner in which the online world affects the offline world, and the risk of backlash associated with banning online contributions, it is imperative to adopt a targeted approach to countering extremism that focuses not only on the content, but also on its impact on individuals at risk of being radicalised by it.

2. Promote dialogue rather than counternarrative or banning

d) Consider feedback as an alternative to banning. In current policy to counter online extremism, the emphasis is on banning unwanted forms of discourse. Many of the accounts included in the DARE sample express concern about these measures and consider them an attempt by the ‘liberal elite’ to silence deviating voices. At present, online social media communication is either allowed or disallowed. However, there may be in-between options, for instance providing feedback to the user without immediately imposing a ban. To address the observed persistence of negativity, social media platforms might share sentiment scores with account holders to indicate the extent to which their messaging deviates from the norm.

e) Perceptions of threat imply anxiety. There are more effective approaches to fear management than denial. In addition to persistent negativity, the excessive focus on threats that characterises the investigated samples indicates the presence of anxiety. Research on anxiety management warns against denial as a response. Yet a focus on cracking down on extremist content carries this imprint. Alternatives may prove more effective.

f) Promote accountability to mitigate blame games. The tendency of the investigated sample to blame the state, the media, and the legal and education systems for their alleged inability to recognise and deal with threats points to another risk of relying solely on restrictive measures to curb online extremism. These restrictive measures have been observed to serve as further evidence that the state and its representatives (which, in the eyes of many in the sample, include the media) are failing in their policies. To address this “blame game”, promoting accountability may prove a more effective strategy than banning. Research shows that promoting accountability (“what can I do?”) may considerably reduce the tendency to blame others. At present, online social media may provide optimal conditions for escaping accountability (e.g. contributors can be anonymous, can have multiple accounts, and are not required to provide personal information). There is considerable potential for innovation in accountable social media.

g) Take alternative visions seriously, if only for their consequences. An overused sociological axiom states that whatever is perceived to be true can have real consequences. In efforts to ban online extremism, directly denying or devaluing opinions as fake news or conspiracy theories may produce counterproductive effects. Dialogue that includes genuine engagement with, and critique of, the visions espoused by extremists may be most effective in the long run.

h) Consider implementing educational toolkits. Awareness, courage, accountability, and empathy, i.e. the skills required to promote moderation, need to be acquired through training. Educational toolkits can facilitate this acquisition.

3. Experiment with diversity

i) Promote online contact between diverging views. For the Right-wing extremist sample, the DARE research uncovered a phenomenon often observed in online chat: the tendency of contributors to coalesce with like-minded others, culminating in mutual reinforcement and the strengthening of initial positions, thus creating a ‘filter bubble’. Although many initiatives show that bringing together people with different viewpoints can have constructive effects in the real world, online initiatives attempting to do the same are at present lagging behind.

Mark Dechesne is Associate Professor in the Faculty of Governance and Global Affairs at Leiden University. On Twitter @markdechesne. These policy recommendations are developed from research by the DARE project (Dialogue About Radicalisation and Equality), which received funding from the European Union’s H2020 research and innovation programme under grant agreement no. 693221.

These recommendations were also published on the European Network Against Racism (ENAR) website.