This blog post is the third (the first is HERE and the second HERE) in a four-part series of article summaries from the EU H2020-funded BRaVE project’s First Monday Special Issue exploring societal resilience to online polarization and extremism. Read the full article HERE [Ed.].

By Amy-Louise Watkin and Maura Conway

Discussions already underway amongst not just academics but also policymakers, law enforcement, and journalists about tech companies’ parts in encouraging polarisation and extremism were given added impetus by the myriad roles played by online platforms in the January 2021 storming of the US Capitol. The aftermath of those events witnessed a flurry of deplatforming of polarising and extremist users, groups, and movements, and their content.

While much of the existing research has centred around the deplatforming debate, our First Monday article employs the concept of social capital to examine the efforts that a range of tech companies have claimed to take to build resilience and discourage polarisation and extremism on their platforms.

Conceptualising ‘Social Capital’

Social capital is a resource originating in social relations and the creation or maintenance of community- and organisation-based social connections that may be acquired and mobilised by a wide array of social actors (e.g., individuals, companies, countries). Put another way, social capital is the ability to secure benefits or resources through one’s memberships and relationships in social networks.

Social capital has been posited as crucial to the efficient functioning of liberal democracies and a prerequisite to resilience. Social media has been found to create all three types of social capital – bonding, bridging and linking. For a community to be resilient, it is held that a balance of all three forms of social capital must be present.

Bonding social capital (strong ties) refers to relationships with family, friends, and those sharing some other important characteristic (e.g., ideology, religion, ethnicity). Bridging social capital (weak ties) refers to the building of relationships between heterogeneous groups; for example, connections between friends-of-friends or with other people from different social groups. Finally, linking social capital is traditionally the relationship between citizens and authorities, such as the government. It involves trust and confidence in government and institutions.

Social Media and Social Capital

There is a plethora of research investigating whether social media can affect social capital. However, the majority of this research has been survey research of social media users, with the surveys containing indexes specific to the context or domain being researched, and the research being dominated by a focus on Facebook. Much of the research is now around ten years old and, given the rapid speed at which platforms evolve, is arguably outdated.

Nonetheless, this body of research revealed that social media networks often take the form of large and heterogeneous collections of weak ties, and although social media can increase all three kinds of social capital, it tends to have the biggest impact on bridging capital. This suggests that social media can expand social networks across different communities and social groups. However, the consequences of this can be both positive and negative (e.g., aiding establishment of hate groups). Unfortunately, little to none of this research is explicit about how these findings could be used to inform policy making across online platforms.

In our First Monday article, we identify the ways in which a range of technology companies incorporate the three main types of social capital (i.e., bonding, bridging, and linking) in their claimed efforts to counter polarisation and extremism and build resilience on their platforms. Our research differs from much of the research on online social capital produced to date in that it is not Facebook-centred, user-focused, or survey-based, but instead focuses on the public pronouncements of a range of broadly social media companies.

Our Data

As regards data, we utilised an extensive and under-utilised primary resource: tech companies’ official blog posts, which contain a range of valuable insights into tech companies’ efforts to counter a range of bad actors. Specifically, we collected and analysed blogs posted by three ‘old’ (i.e., Facebook [established 2004], Twitter [established 2006], YouTube [established 2005]) and three ‘new’ companies (i.e., Discord [established 2015], Telegram [established 2013], and TikTok [established 2016]) in the period September 2017 to August 2020 that addressed building resilience, countering polarisation, and/or countering extremism. Together this amounted to 436 posts, which accounted for 30 percent of all the posts made by the six companies on their corporate blogs in the data collection period and resulted in over 300,000 words of text for analysis.

Research Findings

It is probably unsurprising that around two-fifths (41 percent) of all blogs published by Facebook and Twitter in the period studied mention their efforts to counter polarisation and extremism. These companies have had longer to learn how their platforms are exploited and how to respond, and they have greater capacity to do so. YouTube’s much lower level of attention to these issues may strike some as more surprising but aligns with Douek’s argument that YouTube is “flying firmly under the radar” and taking a strategy of keeping “its head down and sort of let[ting] the other platforms take the heat”.

The newer platforms’ smaller percentages of blog posts regarding countering efforts may be expected given their much more recent origins and potentially also their lesser knowledge and capacity to make such efforts and, in the case of Telegram, lesser willingness to do so. Having said this, despite being newer, TikTok posted significantly more blogs than the two other newer platforms, many of them quite lengthy, communicating to its users its efforts to counter polarisation and extremism. This suggests that TikTok is at least keen to be seen as making efforts in this area, and also that platform size, rather than merely age, is an important factor.

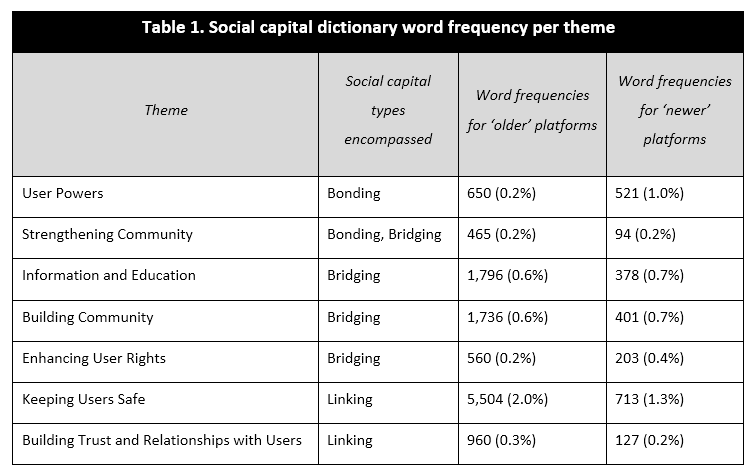

Seven themes emerged from our analysis of the selected blog posts. The first was ‘granting user powers’, which referred to the many tools that platforms have been implementing to allow users to make more decisions for themselves regarding their user experience. The next was ‘strengthening community’, which focused on the platforms’ efforts to strengthen existing communities on their platforms. The third was ‘the provision of information and education’, which focused on educating users on prohibited content and identifying misinformation. The fourth was ‘building community’, which focused on creating connections and increasing participation, diversity, tolerance and partnerships. The fifth was ‘enhancing user rights’, whereby a narrative of having human rights, civil rights, and free speech at the centre of policymaking and content moderation was put forth. ‘Keeping users safe’ was the next theme and focused on rules, policies and community guidelines, etc. The final theme, ‘building trust and relationships with users’, focused on transparency reports, consultations and user feedback as methods of building trust with users.

Table 1 shows the type(s) of social capital associated with each theme and how frequently the words for each theme appeared in the social capital dictionary.
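For readers unfamiliar with dictionary-based text analysis, the following is a minimal Python sketch of how term frequencies of this general kind can be counted. It is an illustration only and not the code or dictionary used in the study: the term lists, the sample sentence, and the function name `count_dictionary_terms` are all hypothetical placeholders.

```python
import re
from collections import Counter

# Hypothetical mini-dictionary for illustration only; these terms are
# placeholders and are NOT the social capital dictionary used in the study.
SOCIAL_CAPITAL_TERMS = {
    "bonding": {"community", "friends", "family"},
    "bridging": {"diversity", "connect", "partnership"},
    "linking": {"trust", "transparency", "feedback"},
}


def count_dictionary_terms(text, dictionary):
    """Count how often each category's terms occur in a piece of text."""
    # Lowercase the text and split it into alphabetic tokens.
    tokens = re.findall(r"[a-z]+", text.lower())
    token_counts = Counter(tokens)
    # Sum the occurrences of every term listed under each category.
    return {
        category: sum(token_counts[term] for term in terms)
        for category, terms in dictionary.items()
    }


# Hypothetical sentence standing in for a corporate blog post.
sample = "We publish transparency reports to build trust, and we help friends connect."
counts = count_dictionary_terms(sample, SOCIAL_CAPITAL_TERMS)
print(counts)
```

A sketch like this matches exact word forms only; real analyses typically also handle plurals, multi-word phrases, and context, which is one reason automated counts are usually paired with manual coding.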

Overall, we found that the platforms have different underlying priorities as to where they believe their efforts should lie with regard to the identified themes and thus social capital production. This is most likely based on the platforms’ chosen values (e.g., Telegram’s commitment to free speech), the audiences they are trying to attract (e.g., TikTok’s younger audience) and their capacity (e.g., resources, expertise, number of staff, financial turnover, etc.) to undertake such efforts. These differences, particularly the latter, are arguably not sufficiently considered in the context of the regulatory demands increasingly being made on platforms.

The research provides some insight into the ways in which platforms are similar to but also different from one another and how this can feed into their responses to countering bad actors on their sites. It sheds light too on why a one-size-fits-all legislative approach is unlikely to be effective (see Watkin (2021) for further work in this area). Additionally, older platforms, having had more time and greater capacity to undertake such efforts, might have been expected to publish about them more than newer platforms. This was only the case across some themes, however. This suggests there could be some benefit from older and newer platforms collaborating and sharing best practices in the realms of resilience-building and countering polarisation and extremism.

The unintended negative consequences of what are, on the face of them, good faith initiatives at building social capital are also worth noting. These include creating exclusion and isolation, as well as removing the need to be tolerant of other views (e.g., by users simply removing them from their feeds). Further, there is the potential, particularly with linking social capital, that a failure to secure perceptions of legitimacy or a breach of trust could result in worse rather than better user-platform relations. These unintended consequences have the potential to have quite negative outcomes given the earlier mentioned findings that these are circumstances that extremist groups and movements have been known to exploit (Brisson, et al., 2017; Pickering, et al., 2007). Platforms must therefore be more mindful of safeguarding their users from unintended consequences that may arise from any efforts they implement to try to counter polarisation and extremism on their sites. Finally, it is recognised that while there is certainly a public-relations element to the blog posts, there is still much value in these large datasets from a researcher perspective.

To read about the research methodology and a full discussion of the findings, check out the full article in the First Monday Special Issue HERE.

Maura Conway is Paddy Moriarty Professor of Government and International Studies in the School of Law and Government, Dublin City University, Ireland; Visiting Professor of Cyber Threats at CYTREC, Swansea University, UK; and the Coordinator of VOX-Pol. On Twitter @galwaygrrl.

Amy-Louise Watkin is Lecturer in Criminal Justice at the University of the West of Scotland. On Twitter @amy_louise_w.

The BRaVE project received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement number 822189. Image credit: pngtree.